Introduction

The pace of new technological developments in animal agriculture (and, indeed, in all of life) is increasing, and new products and technologies are available every day. Or so it seems! But how does a producer tell the difference between something that will be truly valuable to his (or her) operation and something that is little more than profit for the vendor?

Two kinds of research

To understand the value of research trials to you, the consumer, it’s important to understand the types of research commonly done in product development. There are generally two categories into which research trials can fall – proof of concept and application. Let’s consider each individually.

Proof of concept research consists of studies that prove (or disprove) the value of a new technology under tightly controlled conditions. These studies are generally smaller (i.e., few animals) and use well defined diets, treatments, and measurements. The objectives of this type of research are to determine whether the idea being tested actually works, at what level of application (what dose to feed an animal), and under what conditions or in which measurements the producer will see the response to treatment. Early experiments may use rats, mice, or even in vitro or lab based testing to simulate the animal of interest. This allows shorter, faster, and cheaper studies before doing studies in calves. Proof of concept studies are usually done prior to application research, typically at research institutes or universities.

Application research is conducted after the background work has been done. These studies are usually the on-farm trials conducted under “real world” conditions. This type of work is very important, since there is more variation in on-farm conditions than in a controlled research trial, and these studies can show whether the response to treatment is greater than the variation in typical conditions. Application research should be done with the types and numbers of calves that give the producer confidence that s/he can expect a response on his/her operation.

Some items to consider

Here’s a list of 10 items you can consider in evaluating trials presented to you:

- What kind of calves and how many? This sets the stage for the experiment. You should be able to determine from the research data presented to you what kind of calves (breed, sex, age) and how many were used in each treatment. Beware of studies that don’t include the number per treatment! This usually means that the researchers are hiding something.

The FPT status of the calves should be clearly stated, particularly if the study is conducted with milk-fed calves. It’s well known that calves that have failure of passive transfer (FPT, insufficient colostrum consumption) grow more slowly and are less efficient than calves fed colostrum. Be sure you’re aware of the status of the group used in the research.

How many calves are enough in a trial? A very good question. For proof of concept research, a few calves per treatment (10-25) is fine, since these studies are usually more controlled and expensive. However, for application research, a larger number of calves is normally required. The number needed to show an effect (if one exists) depends somewhat on the amount of variability in the operation. A greater number of calves does not necessarily mean that the experiment is “better”. Above a certain critical level (which depends on each situation), there is little extra value in adding more calves to an experiment.

Other aspects of management – hutches vs. barn vs. pens, climate, season of the year, etc. can all affect the outcome of research, so these issues should be clearly stated in a research report, also.
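To illustrate how the number of calves depends on variability, here is a minimal sketch using a common normal-approximation formula for comparing two treatment means. It is not taken from any particular trial: the function name, the 5% significance level, the 80% power target, and the example standard deviations and differences in average daily gain (kg/day) are all assumptions chosen for illustration.

```python
from math import ceil
from statistics import NormalDist


def calves_per_treatment(sd, difference, alpha=0.05, power=0.80):
    """Approximate calves needed per treatment for a two-group comparison.

    Normal-approximation formula (an assumption of this sketch):
        n = 2 * (z_(1 - alpha/2) + z_power)^2 * (sd / difference)^2
    sd         -- calf-to-calf standard deviation of the measurement
    difference -- smallest treatment difference worth detecting
    """
    if sd <= 0 or difference <= 0:
        raise ValueError("sd and difference must be positive")
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)
    z_power = standard_normal.inv_cdf(power)
    return ceil(2 * (z_alpha + z_power) ** 2 * (sd / difference) ** 2)


# Hypothetical average daily gain figures, in kg/day.
controlled_trial = calves_per_treatment(sd=0.10, difference=0.10)
variable_farm = calves_per_treatment(sd=0.15, difference=0.10)
print(controlled_trial, variable_farm)
```

With the same target difference, the more variable setting needs more calves per treatment, which is why on-farm trials generally call for larger groups than tightly controlled studies.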

- What was the experimental treatment? What was the experimental product being used in the study, and in what amount? Early research trials (e.g., proof of concept) may use prototypes of the final experimental product, and in amounts different from the amount recommended for your operation. Unfortunately, unscrupulous individuals or companies sometimes conduct research at a given level of a product (say, 10 grams of product per day) that shows a significant response, but then recommend feeding only 6 grams of product per day because 10 grams (the functional dose) is too expensive to be used on the farm. Be aware of dosages!

- What was the liquid diet? If preweaned calves are used in the study, be sure you know whether whole milk, waste milk or milk replacer was fed; how much was fed and for how long.

- What was the solid diet? Were calves offered dry feed? If so, what? How much? What was the nutrient content of the feed? Variation among experiments here can be surprising. Some researchers may feed no dry feed to calves fed products intended to be added to milk replacers, for example. Others may limit the amount of dry feed to show greater differences among treatments. While this may be justified in proof of concept research, application (on-farm) research should be done under conditions similar to that of good, modern calf management.

- Were there experimental controls? Was there a negative control in the study? A negative control is the group of animals that would be fed and managed under normal conditions. If a dietary additive is the experimental treatment, the experimental controls would be the group that would NOT be fed that additive. A common way of “cheating” in research is to only compare an experimental product to a positive control. For example, consider an additive to milk replacer that is purported to be a replacement for antibiotics. It might be tempting to simply feed two treatments – a group of calves fed the experimental product and a group fed antibiotics. If there is no difference between the experimental product and the antibiotic, the research may claim that the product can “replace” antibiotics. This is unreasonable and incorrect – it may simply have been the case that there was too much variation in the study to be able to show a difference between treatments.

- What was measured? The measurements that you will evaluate should have both biological and economic significance to you. It should also be something that you are currently measuring on your operation to determine whether or not the change you make is truly effective. Important measures for calves may include growth rates (average daily gain), feed efficiency, cost of feed consumed, efficiency of IgG absorption (for colostrum feeding studies), body composition (height, weight, hip width may be used to estimate composition), age at weaning, breeding and calving, and of course, future milk production.

- How long were the calves monitored? Make sure you’re comfortable with the length of the study. The number of weeks or months the study was done should be clearly stated.

- Were the measurements “normal”? In their eternal quest to report statistically significant differences (for more information on statistical significance, see Calf Note #127), researchers sometimes lose sight of the absolute values they report. It’s important that the values reported are typical of modern production – for example, would you consider a study as biologically valid if calves at 56 days of age weighed less than 150 lbs (68 kg)? Or if the average age at weaning for a group of calves was 28 days when you normally wean your calves at 60 or 90 days?

Beware of data that are expressed as a “percent of control”! For example, let’s say that you are presented information that shows that calves fed a new product grew 15% faster than control calves. This should be an immediate “red flag”. Usually, data are presented as a percent of controls when the researchers don’t want you to see the absolute numbers. It could be that calves fed the new product grew at 115 grams per day versus control calves that grew at 100 grams per day during the experiment. While this is a 15% increase, these are very slow rates of growth and not representative of what would be considered normal on any operation.

- What was the variation? In tables or figures, there should be some indication of the variation around the mean (for more information about variation, see Calf Note #127). A well designed study should make available either a standard deviation or standard error as well as the probability of a significant difference (the “P” value). Too many studies that I’ve seen are simply a comparison of the averages of control versus treatment with NO statistical evaluation of the data. These research studies are useless.
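The “percent of control” arithmetic can be checked with a few lines of code. This is a minimal sketch: the function name is made up for illustration, the 100 and 115 grams per day figures come from the example in the text, and the 600 and 690 grams per day figures are hypothetical values added only for contrast.

```python
def describe_response(control_mean, treated_mean):
    """Return (absolute difference, percent-of-control difference)."""
    if control_mean <= 0:
        raise ValueError("control mean must be positive")
    absolute = treated_mean - control_mean
    percent = 100 * absolute / control_mean
    return absolute, percent


# Growth rates in grams per day.
slow_growing = describe_response(100, 115)   # figures from the example in the text
well_grown = describe_response(600, 690)     # hypothetical figures for contrast

print(slow_growing)  # absolute gain of 15 g/day, 15% of control
print(well_grown)    # absolute gain of 90 g/day, also 15% of control
```

Both comparisons report the same 15% improvement, yet the absolute responses differ sixfold. That is why the absolute values, not just the percentage, need to be on the table.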

- What statistical design? There are many ways to organize research studies. Some of the most common are called “completely randomized” or “randomized complete block” designs. It’s not necessary to have a complete knowledge of the inner workings of statistics to determine if the research in question is valuable. Here are a few of the most common problems with designs.

No randomization. Most experimental designs require that animals be assigned randomly to treatment. Under controlled experiments, researchers have sophisticated programs that provide randomization schemes for assigning animals to treatment. Randomization is one of the most fundamental aspects of most statistical designs and violating this rule (e.g., by assigning Holsteins to one treatment and Jerseys to the other treatment, or all cows fed experimental product in July followed by all cows fed control diet in August) is a huge red flag for any trial.
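As a rough illustration of what random assignment means in practice, the sketch below shuffles a list of calves and deals them out to treatments in equal-sized groups. It is a simplified, completely randomized scheme, not the software any particular research group uses; the function name, calf identifiers, and treatment labels are hypothetical.

```python
import random


def randomize_to_treatments(calf_ids, treatments, seed=None):
    """Randomly assign calves to treatments in (nearly) equal-sized groups."""
    rng = random.Random(seed)      # a seed makes the scheme reproducible
    shuffled = list(calf_ids)
    rng.shuffle(shuffled)          # random order removes any choice by the researcher
    assignment = {}
    for position, calf in enumerate(shuffled):
        # Deal the shuffled calves out to the treatments in turn.
        assignment[calf] = treatments[position % len(treatments)]
    return assignment


# Hypothetical calf identifiers and treatment labels.
calves = [f"calf_{number:02d}" for number in range(1, 21)]
assignment = randomize_to_treatments(calves, ["control", "product"], seed=7)
for calf in calves[:5]:
    print(calf, assignment[calf])
```

The point is that chance, rather than breed, birth date, or the researcher’s judgment, decides which calf gets which treatment.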

There are several ways to “cheat” the rules of statistics – either in error or purposely. Here are a couple of common examples of “cheating” that I’ve seen in the industry.

Cheating version #1. To perform a statistical evaluation, at least two observations per treatment are required. So, if you have a control vs. an experimental product, you need at least two calves (or two pens of calves, see below) for the control group and two for the experimental product. Too many trials are conducted that compare one pen of animals (the control) versus one pen of animals that were fed the experimental product. NO!!! This does not allow any measure of variation or any statistical measurement. I believe that trials comparing one pen against one pen are not research.

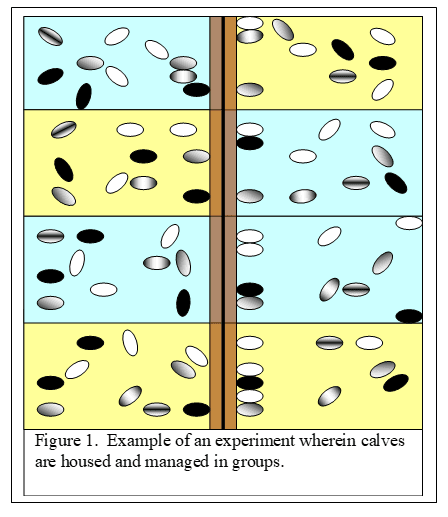

Cheating version #2. There is another very common way of “cheating” in research studies where calves are fed different dietary treatments. This occurs when calves are housed, managed and fed in groups (say 10 calves per pen) but the researchers assume that each calf is managed and fed as a separate animal. According to statistical “rules”, if animals are managed in groups, the analysis has to be done on the pen, not the animal.

In figure 1, there are eight pens (alternating blue and yellow) each containing 10 calves per pen. The blue pens are fed the diet containing the control treatment and the yellow pens are fed the diet with the experimental product. Let’s say that the researcher wants to determine whether the experimental product improves growth of the calves from 28 days of life to 100 days of life. So, at the beginning of the study, he weighs all calves and gets 80 body weights. He does the same at 100 days and calculates the total body weight gain for each calf. Here’s the important point. These calves were housed and managed in groups (pens) instead of individually. According to the “statistical rules”, the researcher has to calculate the average growth rate of each pen and use this average in his statistical analysis. So, instead of 80 observations, he uses eight observations. This makes it more difficult to report a statistically significant difference. That’s why it’s so tempting to “cheat” and use the 80 body weights. Unfortunately, this occurs quite commonly, particularly in application research.
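The pen example above can be sketched in code to show where the 80 observations become eight. The body weight gains below are randomly generated placeholders, not real data, and the pen numbering, treatment labels, and function name are assumptions made for illustration.

```python
import random
from statistics import mean

rng = random.Random(42)

# Eight pens, alternating treatments as in figure 1 (pen numbering is hypothetical).
pen_treatment = {pen: ("control" if pen % 2 else "product") for pen in range(1, 9)}

# Ten calves per pen; placeholder weight gains (kg) over the trial, not real data.
gains_by_pen = {pen: [rng.gauss(60, 8) for _ in range(10)] for pen in pen_treatment}


def pen_level_observations(gains_by_pen, pen_treatment):
    """Collapse calf gains to one mean per pen, grouped by treatment."""
    by_treatment = {}
    for pen, gains in gains_by_pen.items():
        by_treatment.setdefault(pen_treatment[pen], []).append(mean(gains))
    return by_treatment


observations = pen_level_observations(gains_by_pen, pen_treatment)
n_calf_weights = sum(len(gains) for gains in gains_by_pen.values())
n_pen_means = sum(len(means) for means in observations.values())

print(n_calf_weights)  # 80 calf gains were recorded...
print(n_pen_means)     # ...but only 8 pen means go into the analysis
```

Each treatment ends up with four observations (its four pen means), and any statistical test should be run on those, not on the 40 individual calves per treatment.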

Summary

Getting a handle on new technologies that come to the market requires some understanding of how the research is done to test these technologies. The above checklist can help you evaluate how these new technologies apply to your operation and how likely they are to provide a positive economic return. Best of luck!